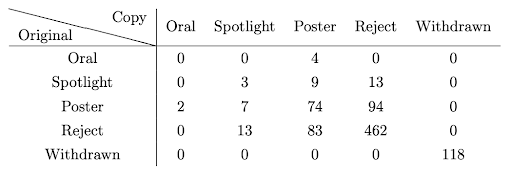

In 2014, 49.5% of the papers accepted by the first committee were rejected by the second (with a fairly wide confidence interval, as the experiment included only 116 papers). This year, the figure was 50.6%. We can also look at the probability that a randomly chosen rejected paper would have been accepted had it been re-reviewed. This was 14.9% this year, compared to 17.5% in 2014.
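As a rough check, both of this year's percentages can be reproduced from counts reported later in this post: 882 duplicated papers, 206 and 195 acceptance recommendations from the two committees, and 203 papers recommended for acceptance by only one committee. The sketch below assumes the rates pool both committees symmetrically. That pooling is an assumption about how the figures were derived, and the function and variable names are illustrative only.

```python
def rereview_rates(n_papers, accepted_a, accepted_b, accepted_by_one):
    """Return (P(reject on re-review | accepted), P(accept on re-review | rejected)).

    Assumption: both committees are pooled, so each duplicated paper
    contributes two decisions.
    """
    total_accepts = accepted_a + accepted_b
    total_rejects = 2 * n_papers - total_accepts
    # Each paper accepted by exactly one committee counts once as an
    # accept that the other committee rejected, and once as a reject
    # that the other committee accepted.
    accept_then_reject = accepted_by_one / total_accepts
    reject_then_accept = accepted_by_one / total_rejects
    return accept_then_reject, reject_then_accept


flipped_accept, flipped_reject = rereview_rates(882, 206, 195, 203)
print(f"{flipped_accept:.1%} of accepted papers rejected on re-review")
print(f"{flipped_reject:.1%} of rejected papers accepted on re-review")
```

Under this pooling assumption, the sketch gives about 50.6% and 14.9%, matching the figures quoted above.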

Feedback from ACs and SACs

After the review period ended and decisions were released, we gave the ACs and SACs assigned to duplicated papers on which the two committees had disagreed access to the reviews and discussion for the other copy of each paper, and asked them to complete a brief feedback survey. Of the 203 papers that were recommended for acceptance by only one committee, we received feedback on 99. Unfortunately, we received feedback from both committees for only 18 papers, which limits the scope of our analysis.

Based on this feedback, the vast majority of cases fell into one of three categories. First, there is what one AC called “noise on the decision frontier.” In such cases, there was no real disagreement, but one committee may have been feeling a bit more generous or more excited about the work and willing to overlook the paper’s limitations. Indeed, 48% of multiple-choice responses were “This was a borderline paper that could have gone either way; there was no real disagreement between the committees.”

Second, there were genuine disagreements about the value of the contribution or the severity of limitations. We saw a spectrum here ranging from basically borderline cases to a few more difficult cases in which expert reviewers disagreed. In some of these cases, there was also disagreement within committees.

Third were cases in which one committee found a significant issue that the other did not. Such issues included, for example, close prior work, incorrect proofs, and methodological flaws.

45% of responses were “I still stand by our committee’s decision,” while the remaining 7% were “I believe the other committee made the right decision.” We can only speculate about why so few respondents sided with the other committee. Part of this could be that opinions, once formed, are hard to change. Part of it could be that many of these papers are borderline, and different borderline papers fundamentally appeal to different people. Part of it could also be selection bias: the ACs and SACs who took the time to respond to our survey may also have been more diligent and involved during the review process, leading to better decisions.

Limitations

Two caveats may affect these results.

First, although we asked authors of duplicated papers to respond to the two sets of reviews independently, there is evidence that some authors put significantly more effort into their responses for the copy that they felt was more likely to be accepted. In fact, some authors told us directly that they would spend the time to write a detailed response only for the copy of their paper with the higher scores. Overall, there were 50 pairs of papers for which authors left comments only on the copy with the higher average score.

To dig into this further, consider the 8,765 papers still under review when initial reviews were released. The acceptance rate for the 7,883 papers not in the experiment was 2,036/7,883 = 25.8%. (The overall acceptance rate for the conference was 25.6%, but that rate also includes papers that were withdrawn or rejected for violations of the CFP before initial reviews were released; here we consider only papers still under review at that point.) As discussed above, the average acceptance rate for duplicated papers was 22.7% (206 papers recommended for acceptance in the original set and 195 in the duplicate set, for 401 acceptances out of 882 × 2 = 1,764 papers). The 95% binomial confidence intervals for the two observed rates do not overlap. Authors changing their behavior may account for this difference, and this confounder may have somewhat skewed the results of the experiment.
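The interval comparison can be sketched as follows. The post does not say which interval method was used, so this example assumes the normal-approximation (Wald) interval. It also treats the two copies of each duplicated paper as independent trials, which is a simplification. The helper name `wald_interval` is illustrative.

```python
import math


def wald_interval(successes, trials, z=1.96):
    """Normal-approximation (Wald) interval for a binomial proportion.

    z=1.96 corresponds to a 95% interval. The choice of the Wald
    method is an assumption; the post does not name a method.
    """
    p = successes / trials
    half_width = z * math.sqrt(p * (1 - p) / trials)
    return p - half_width, p + half_width


# Counts taken from the paragraph above.
non_dup = wald_interval(2036, 7883)       # papers not in the experiment
dup = wald_interval(206 + 195, 882 * 2)   # both copies of duplicated papers

print(f"not duplicated: {non_dup[0]:.3f} to {non_dup[1]:.3f}")
print(f"duplicated:     {dup[0]:.3f} to {dup[1]:.3f}")
print("intervals overlap:", dup[1] >= non_dup[0])
```

With these assumptions the intervals come out at roughly 24.9% to 26.8% and 20.8% to 24.7%, so they do not overlap, although the gap between them is narrow.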

Second, when decisions shifted as part of the calibration process, ACs were often asked to edit their meta-reviews to move a paper from “poster” to “reject” or vice versa, or from “spotlight” to “poster” or vice versa. We observed several cases in which ACs made these changes without altering the field for whether a paper “can be bumped up” or “can be bumped down.” For example, there were nine cases in which it appears that a duplicated paper was initially marked “poster” and “can be bumped down” and later moved to “reject,” ending up marked as the nonsensical “reject” and “can be bumped down.” This could introduce minor inaccuracies into our analysis of shifted thresholds.
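A consistency check of the kind that would surface these stale flags might look like the sketch below. The record layout, the field names (`paper_id`, `decision`, `bump`), and the sample records are all hypothetical; the actual meta-review form may be structured differently. Only the one contradictory combination named above is flagged.

```python
# Hypothetical field values; the real meta-review form may differ.
CONTRADICTORY = {("reject", "can be bumped down")}


def find_stale_flags(meta_reviews):
    """Return IDs of meta-reviews whose decision and bump flag contradict."""
    return [
        review["paper_id"]
        for review in meta_reviews
        if (review["decision"], review["bump"]) in CONTRADICTORY
    ]


# Made-up records for illustration only.
sample = [
    {"paper_id": "A", "decision": "poster", "bump": "can be bumped down"},
    {"paper_id": "B", "decision": "reject", "bump": "can be bumped down"},
    {"paper_id": "C", "decision": "reject", "bump": "can be bumped up"},
]
print(find_stale_flags(sample))  # ['B']
```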

Key Takeaways

The experimental results appear consistent with those of the 2014 experiment, when the conference was an order of magnitude smaller. Thus, we see no evidence that the decision process has become more or less noisy with increasing scale.

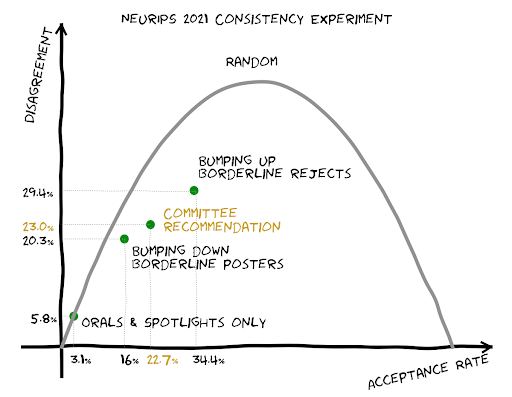

For program chairs, there is a perennial question: “How selective should the conference be?” With the current review process, it appears that being significantly more selective would substantially increase the arbitrariness (i.e., the fraction of accepted papers that would receive a different decision upon re-review). However, increasing the acceptance rate may not decrease the arbitrariness appreciably.

Finally, we encourage authors not to be overly discouraged by rejections, as there is a real possibility that a rejection says more about the review process than about the paper.

Acknowledgments

We would like to thank the entire OpenReview team, especially Melisa Bok, for their support with the experiment. We also thank the reviewers, ACs, and SACs who contributed their time to the review process, and all of the authors who submitted to NeurIPS.